Simplifying English Learning Through AI

An AI-powered platform that combines intelligent vocabulary tools, voice-based tutoring, and community for learners across Southeast Asia who need more than flashcards.

Client

Peilin — Founder, Melon Labs

Scope

Product Strategy

AI Engineering

Mobile Development

Community Infrastructure

My Role

Led product and engineering across strategy, AI architecture, mobile, and community infrastructure, spanning five product surfaces. From zero to shipped.

A vibe-coded product that had already gone further than most

The founder had already built a working English dictionary app on her own. But vibe-coded products hit a ceiling: the design didn't feel credible, the AI-generated definitions couldn't be trusted because of hallucinations, and there was no path from prototype to something people would pay for.

She came to us with two clear needs: make the product real enough to generate revenue, and build a community around English learning that would become her retention engine. The product instinct was sharp. The gap was between what she'd built and what the market would trust.

Three layers, one connected product

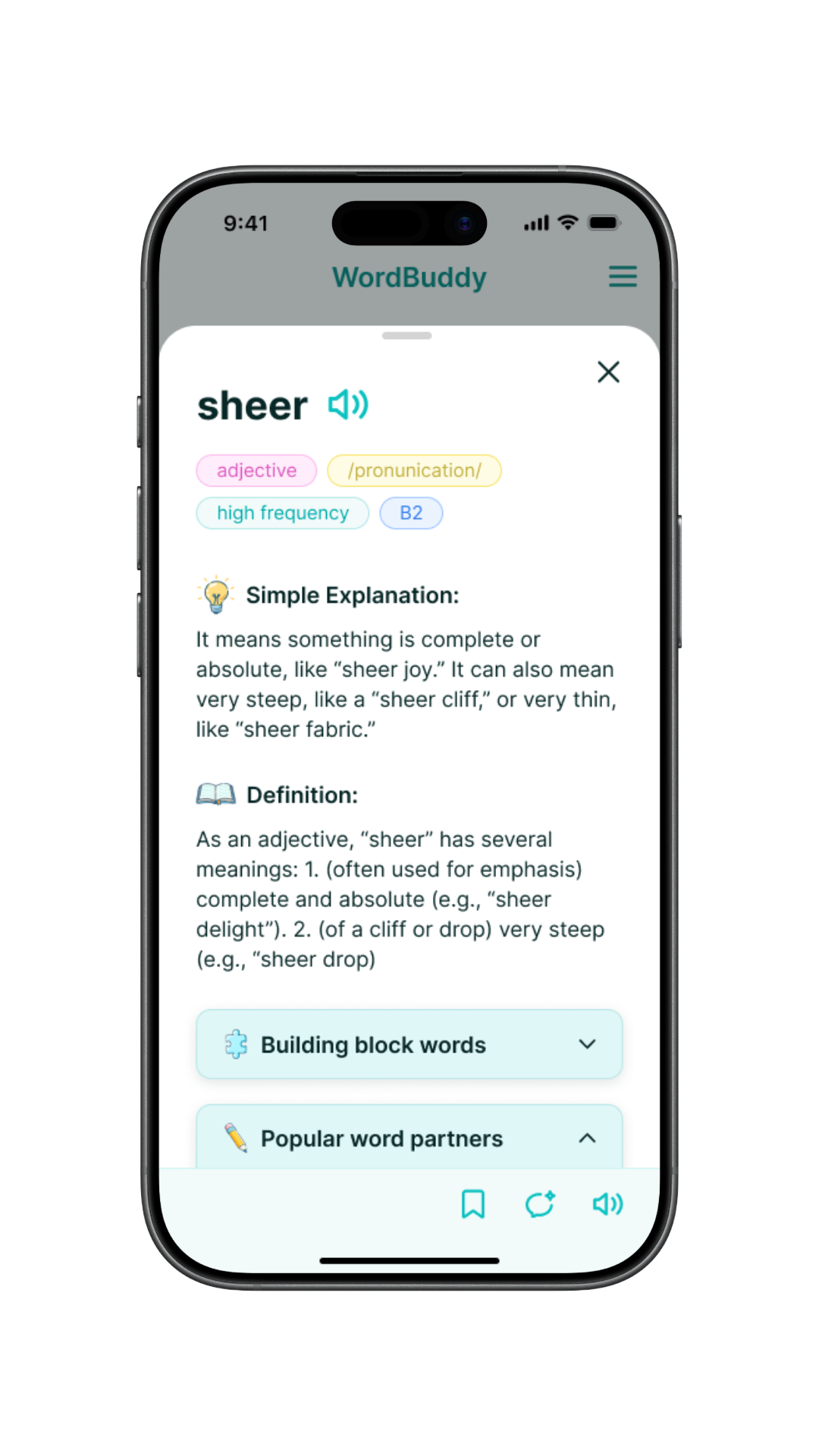

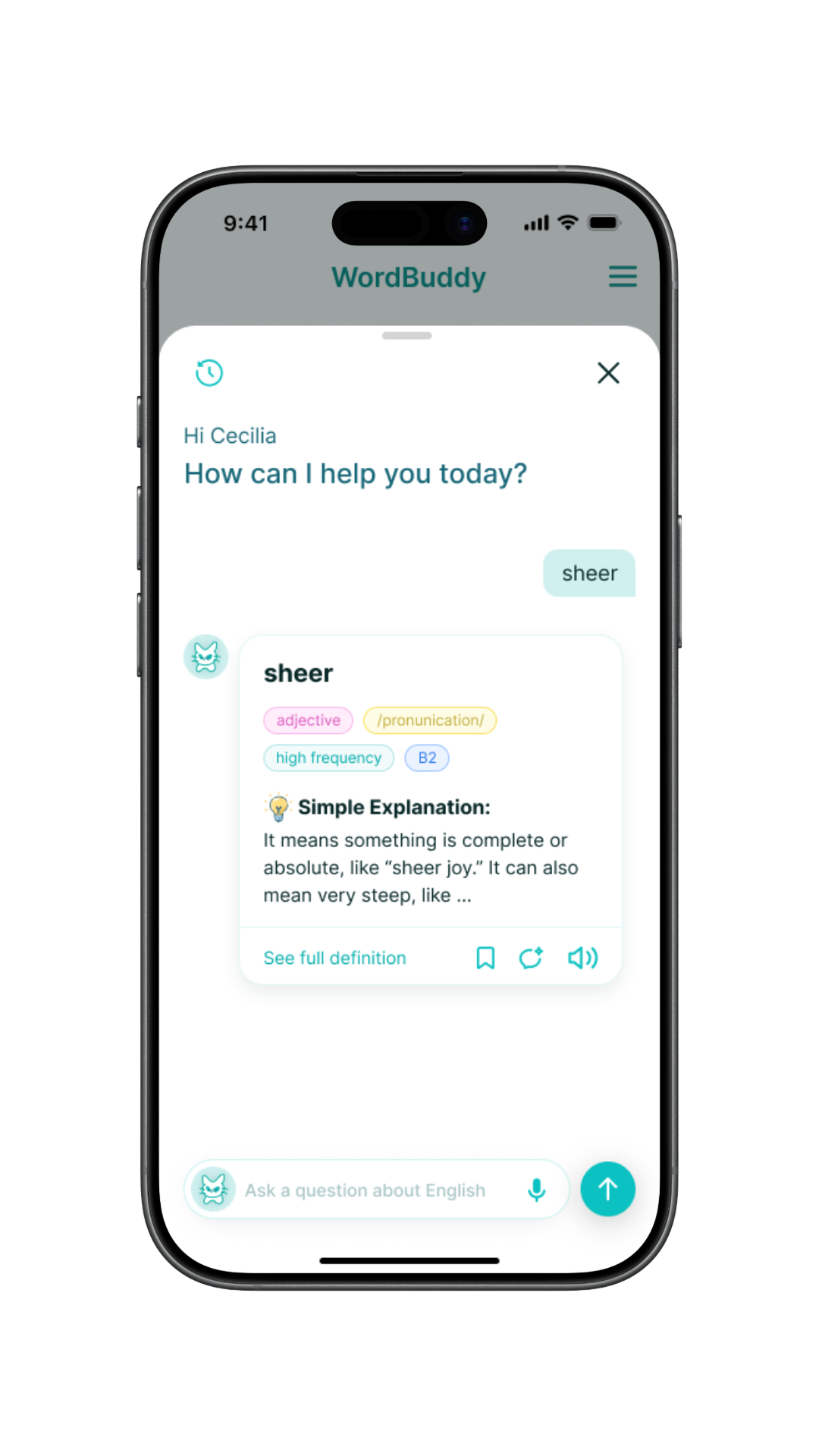

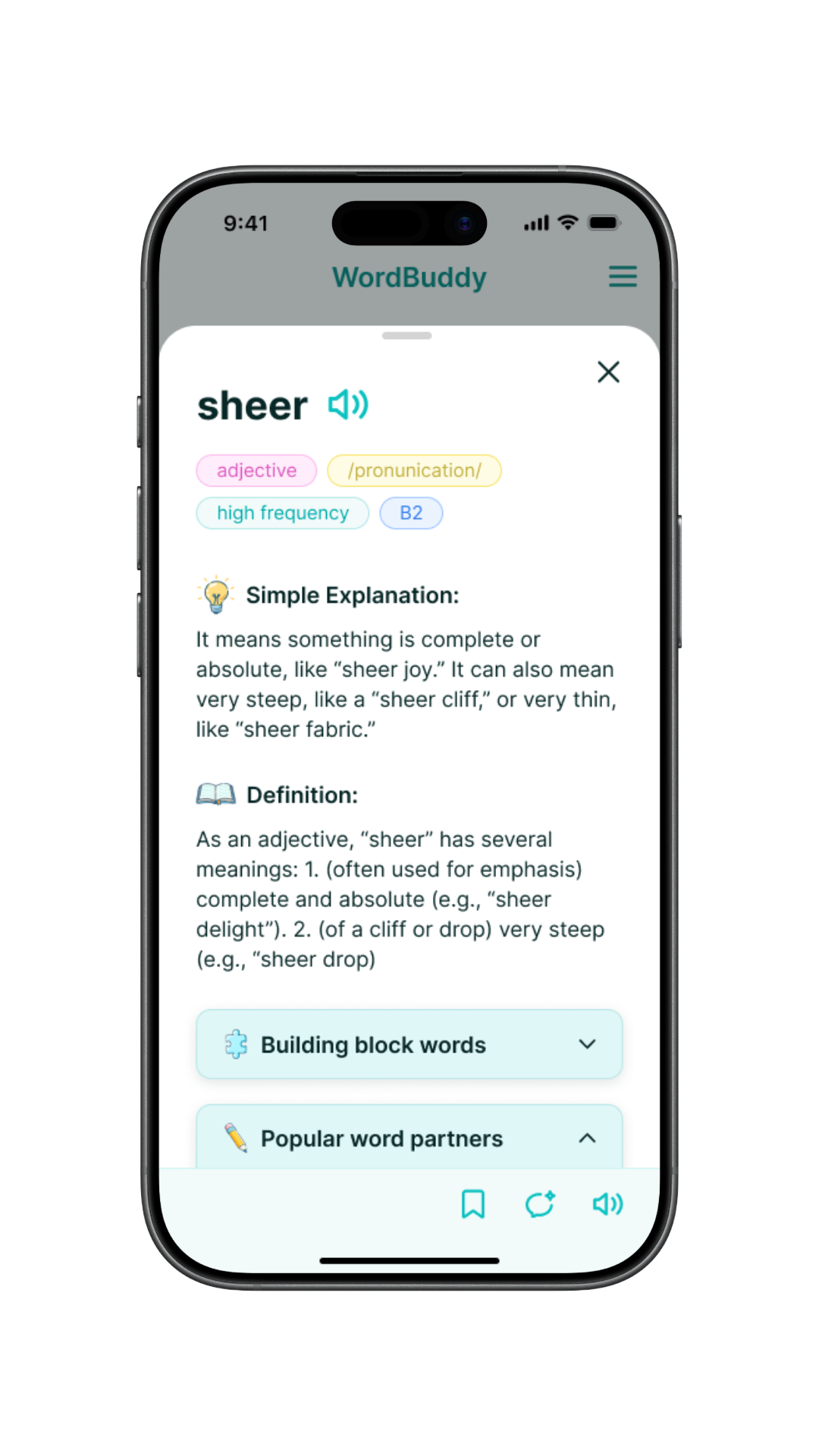

AI Dictionary

LLM-powered definitions with confidence scoring, tappable words inside definitions, and multi-purpose chat covering vocabulary, idioms, grammar, and translation in one interface.

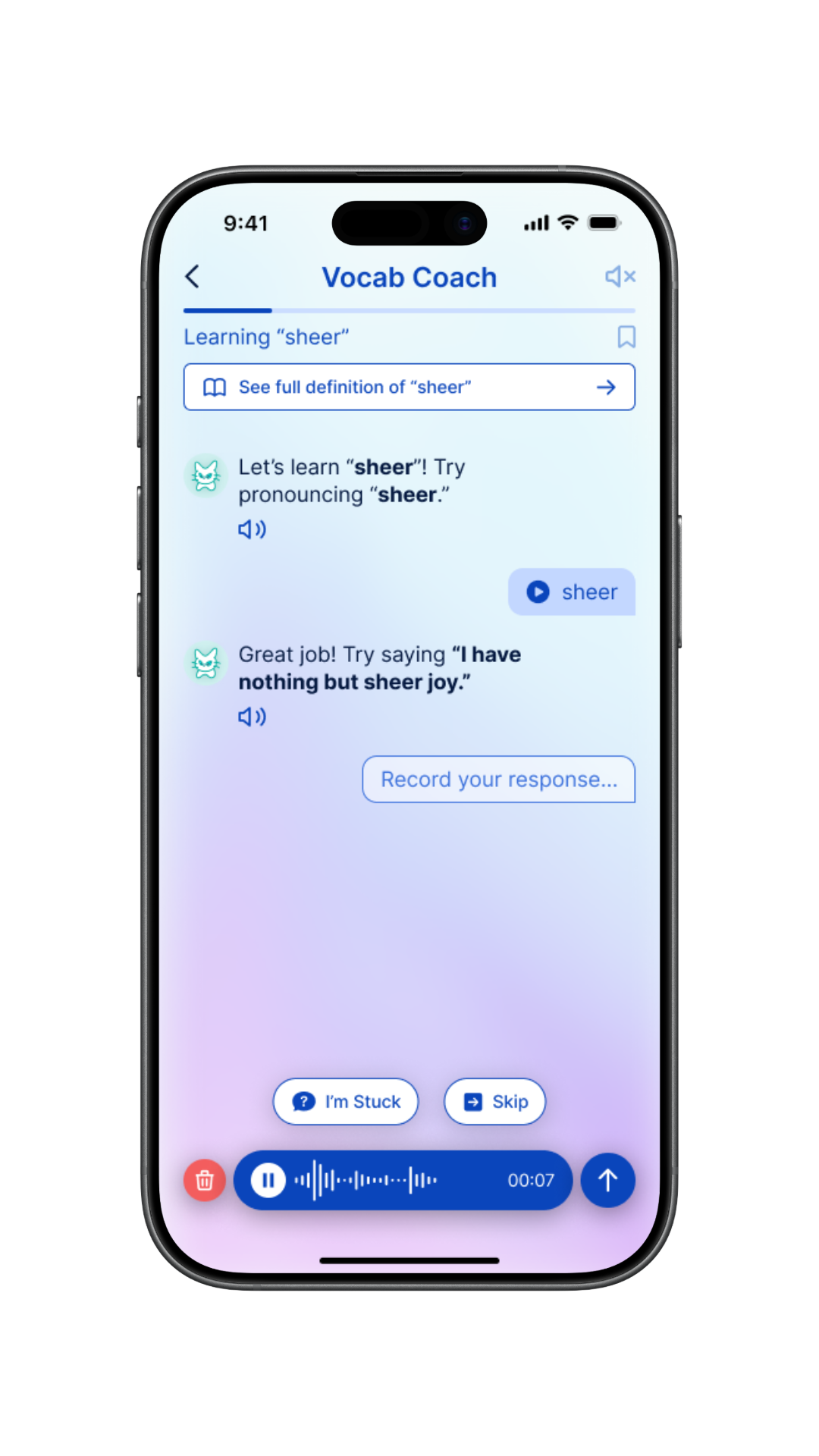

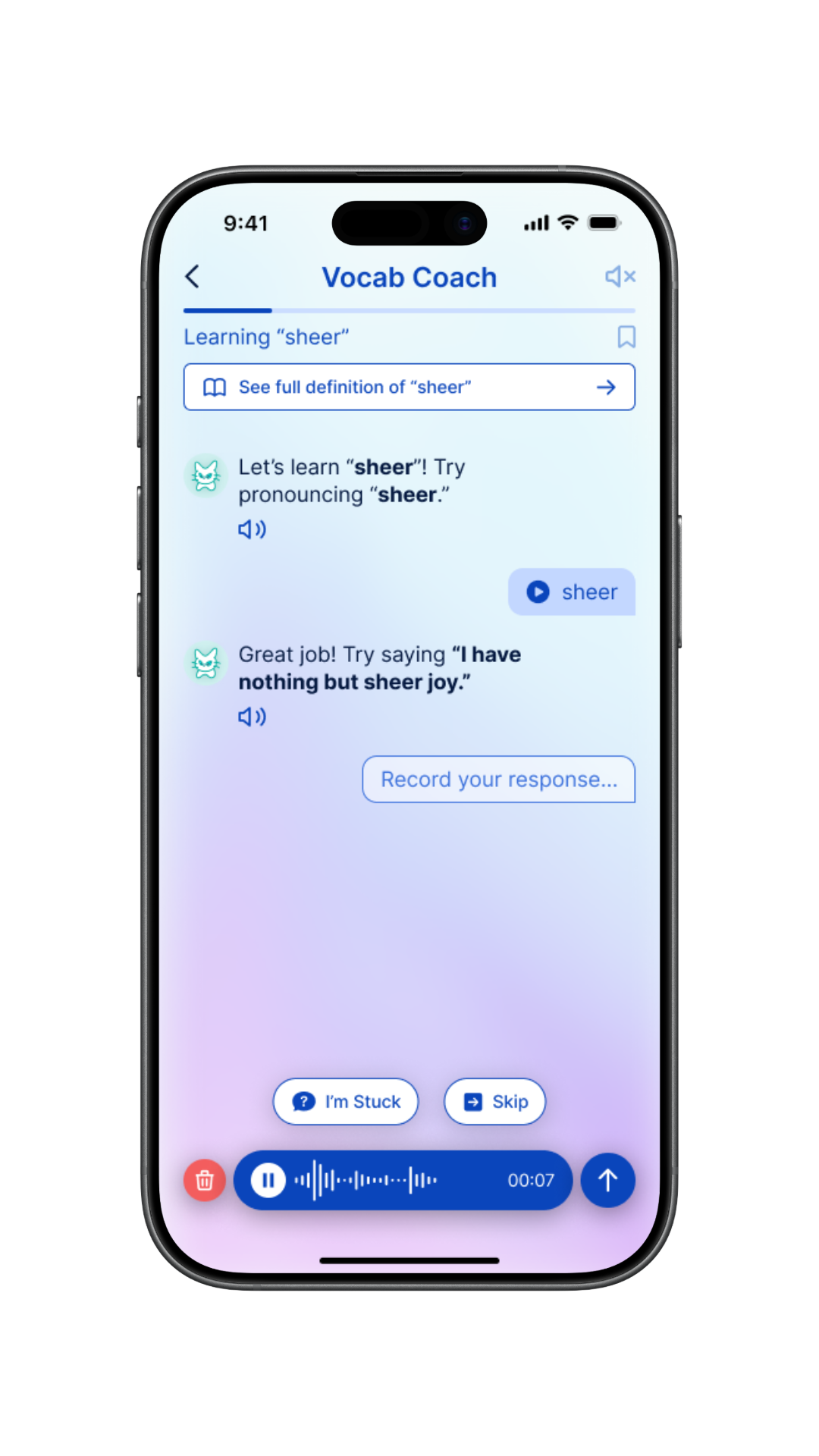

Voice AI Tutor

Guided conversation sessions with voice-only input by design. Multiple modes: vocabulary practice, debate, Q&A, and idiom role-play. One backend, many experiences.

Community

Where learners validate AI answers with real tutors. Integrated with Heartbeat, Stripe + Xendit for SEA payments, live workshops, and membership tiers.

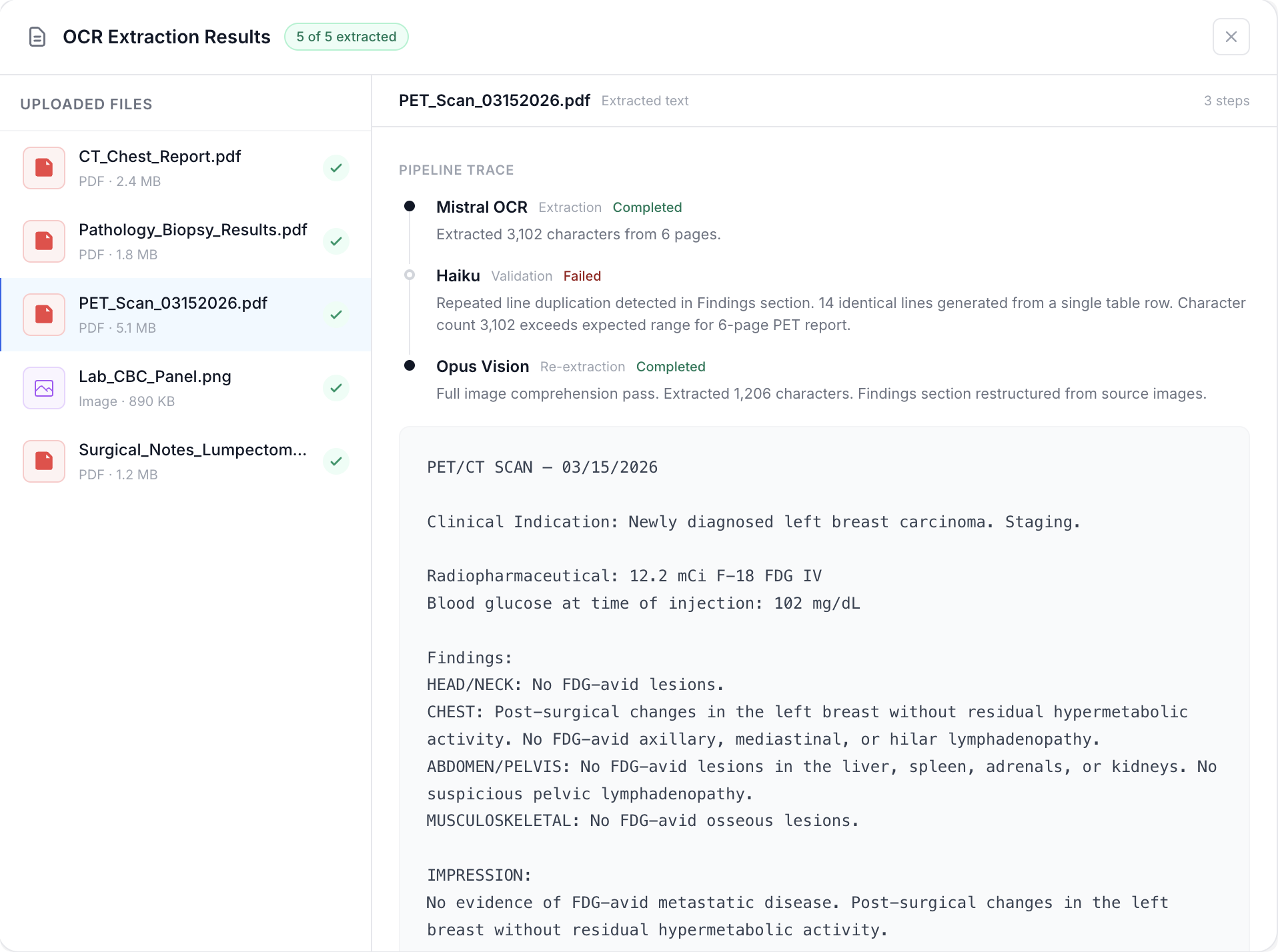

LLM-generated definitions aren't trustworthy on their own

The hallucination problem was the first thing we had to solve. Learners need to trust what they're reading. We evaluated three paths. Fine-tuning was too expensive, with diminishing returns. Dictionary APIs (Oxford, Cambridge, Wordnik, Free Dictionary, Merriam-Webster) were either prohibitively priced for commercial use or too limited in data. Web search grounding hit the sweet spot: economical, flexible, and every definition is grounded in real sources with cited references via Exa and Perplexity.

The founder initially wanted traffic lights and confidence scores to signal reliability. We found something better: showing the actual source links at the bottom of each definition. Users verify content themselves by clicking through to the original webpages. Even just seeing the logos of the source sites builds trust subconsciously. It's the same pattern OpenAI and Anthropic landed on. A small craft decision, but high impact.

If a learner doesn't understand a word inside the definition, they can tap it to instantly look it up without leaving the card. Every word is a doorway, not a dead end.
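One way to make every word tappable is to split the definition into tokens and mark which ones are words. The sketch below is an illustration under assumptions, not the shipped code: the `tokenize` name and the ASCII-only word pattern are hypothetical.

```python
import re

# ASCII-only word pattern, kept simple for illustration (an assumption).
# Hyphenated words and contractions stay together as one tappable token.
TOKEN = re.compile(r"(?P<word>[A-Za-z]+(?:['-][A-Za-z]+)*)|(?P<other>[^A-Za-z]+)")


def tokenize(definition: str) -> list[tuple[str, bool]]:
    """Split text into (token, tappable) pairs; joining the tokens restores the text."""
    return [(m.group(), m.lastgroup == "word") for m in TOKEN.finditer(definition)]


tokens = tokenize("Short-lived; it doesn't last.")
tappable_words = [text for text, tappable in tokens if tappable]
```

The frontend can then render tappable tokens as lookup links and everything else as plain text, so the card never has to navigate away.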

Exa Search

Perplexity

Grounded definitions are credible. They're also slow.

The initial implementation used Perplexity and Exa to both fetch sources and generate the fully formatted response in a single call. Bloated system prompts, longer processing, 30-second load times per word. Unusable. Most people reach for fine-tuning or faster models. The real fix is narrowing what each LLM call does: treat each one like a small function. We broke the single call into two. The web search model only fetches the definition and sources. Then a cheaper, faster model (Gemini) formats the results for the frontend. The search step alone became 4-5x faster.
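The two-call split can be sketched as two small functions composed together. This is a minimal illustration, not the production code: `build_card`, `fake_search`, and `fake_format` are hypothetical names, and the stubs stand in for the web search model and the formatting model without calling any real API.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class SearchResult:
    word: str
    raw_definition: str
    sources: list[str]


@dataclass
class DefinitionCard:
    word: str
    definition: str
    sources: list[str]


def build_card(
    word: str,
    search: Callable[[str], SearchResult],
    format_text: Callable[[str], str],
) -> DefinitionCard:
    # Call 1: the search-grounded model only fetches the definition and sources.
    result = search(word)
    # Call 2: a cheaper, faster model only formats the text for the frontend.
    formatted = format_text(result.raw_definition)
    return DefinitionCard(word=result.word, definition=formatted, sources=result.sources)


# Stubs standing in for the two model calls (hypothetical, no network access).
def fake_search(word: str) -> SearchResult:
    return SearchResult(word, f"  {word}: a placeholder definition  ", [f"https://example.com/{word}"])


def fake_format(text: str) -> str:
    return text.strip().capitalize()


card = build_card("ephemeral", fake_search, fake_format)
```

Because each step takes a plain function, either model can be swapped without touching the other.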

The second optimization was UX. We split the loading into two tiers: lightweight blocks that don't need sources are generated by Gemini instantly, while complex web-sourced blocks show a spinner as the search runs in the background. The perceived wait dropped from 30 seconds to 4. Once any learner looks up a word, the full card gets cached globally. With 12,000+ definitions now cached, most lookups resolve in under half a second. The first learner to look up any word still waits. We accepted that tradeoff.

30s

Before optimization

4s

After two-tier loading

<0.5s

Cached lookups (12,000+ definitions)
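The two-tier loading and global cache described above could look roughly like this. It is a sketch under assumptions: the in-memory `CACHE` dict, the `lookup` function, and the two stub coroutines are hypothetical stand-ins for the real cache and model calls.

```python
import asyncio
from typing import Callable

# Global cache shared by all learners (an in-memory dict here; hypothetical).
CACHE: dict[str, dict[str, str]] = {}


async def quick_blocks(word: str) -> dict[str, str]:
    # Stand-in for the fast model call that needs no sources.
    return {"summary": f"Quick summary of {word}"}


async def sourced_blocks(word: str) -> dict[str, str]:
    # Stand-in for the slower web-search call.
    await asyncio.sleep(0.01)
    return {"definition": f"Sourced definition of {word}"}


async def lookup(word: str, on_partial: Callable[[dict[str, str]], None]) -> dict[str, str]:
    key = word.strip().lower()
    if key in CACHE:
        return CACHE[key]  # cached card: no model calls at all
    # Start the slow search in the background before awaiting the fast tier.
    slow_task = asyncio.create_task(sourced_blocks(key))
    fast = await quick_blocks(key)
    on_partial(fast)  # render lightweight blocks while the spinner covers the rest
    card = {**fast, **(await slow_task)}
    CACHE[key] = card
    return card


shown: list[dict[str, str]] = []
first = asyncio.run(lookup("Ephemeral", shown.append))
second = asyncio.run(lookup("ephemeral", shown.append))
```

The first lookup pays for both tiers. The second returns the cached card and never triggers a partial render.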

Voice-only input. By design, not by limitation.

The goal is to push learners toward actually speaking rather than hiding behind text. We tested it ourselves: even native English speakers had to genuinely think through grammar when forced to speak their answers.

The obvious path was an all-in-one voice AI like ElevenLabs. Better out-of-the-box experience, polished voice quality. But the unit cost at scale was a problem. We built a custom pipeline instead: the user speaks, Deepgram transcribes it to text, the text goes to an LLM via OpenRouter, and the LLM response gets sent back through Deepgram for text-to-speech. Simpler, composable, and significantly cheaper. The tradeoff: less polish than ElevenLabs out of the box. The gain: a cost structure that actually scales.
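The pipeline is three steps chained together. A minimal sketch, assuming each provider is wrapped in a plain function: `voice_turn` and the three stubs are hypothetical, and no real Deepgram or OpenRouter call is made here.

```python
from typing import Callable


def voice_turn(
    audio_in: bytes,
    transcribe: Callable[[bytes], str],
    complete: Callable[[str], str],
    synthesize: Callable[[str], bytes],
) -> bytes:
    """One tutor turn: speech in, speech out."""
    text = transcribe(audio_in)   # speech-to-text step
    reply = complete(text)        # LLM step
    return synthesize(reply)      # text-to-speech step


# Stubs so the sketch runs offline; real wrappers would call the providers.
def fake_transcribe(audio: bytes) -> str:
    return audio.decode("utf-8")


def fake_complete(text: str) -> str:
    return f"You said: {text}"


def fake_synthesize(text: str) -> bytes:
    return text.encode("utf-8")


audio_out = voice_turn(b"hello", fake_transcribe, fake_complete, fake_synthesize)
```

Keeping each stage behind its own function is what makes the pipeline composable: any one provider can be replaced without rewriting the turn logic.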

Latency was a problem here too. The tutor has multiple modes, each with its own prompt. The initial approach sent the full combined prompt on every call. We added a small diagnostic step: a lightweight LLM call that determines the current mode and conversation stage, then routes to only the relevant prompt. An extra call that actually reduced total latency.
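The routing step can be sketched as a classifier followed by a table lookup. Everything named here is an assumption for illustration: the stage names, the prompt text, and the keyword rule in `classify`, which stands in for the lightweight LLM call.

```python
# Hypothetical prompt table keyed by (mode, stage); the stage names are invented.
PROMPTS: dict[tuple[str, str], str] = {
    ("debate", "opening"): "Take a position and invite the learner to argue back.",
    ("debate", "follow_up"): "Challenge the learner's last argument.",
    ("qa", "opening"): "Answer the learner's question about English usage.",
    ("qa", "follow_up"): "Build on the previous answer with an example.",
}


def classify(history: list[str]) -> tuple[str, str]:
    """Stand-in for the diagnostic LLM call: returns (mode, stage)."""
    last = history[-1].lower() if history else ""
    mode = "debate" if "disagree" in last else "qa"
    stage = "opening" if len(history) <= 1 else "follow_up"
    return mode, stage


def select_prompt(history: list[str]) -> str:
    # Only the prompt for the detected mode and stage goes to the main model.
    return PROMPTS[classify(history)]


debate_prompt = select_prompt(["I disagree with that."])
qa_prompt = select_prompt(["What does 'albeit' mean?", "Can you give an example?"])
```

The main call then carries one short prompt instead of every mode's instructions, which is where the latency saving comes from.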

Guided Practice

8-phase session covering pronunciation, meaning, usage, collocations, and sentence construction for a single word.

Debate

The AI takes a position and the learner argues back, building fluency under pressure.

Q&A

Open-ended questions about English usage, grammar, or context.

Idiom Role-Play

Trade roles using expressions in real conversational scenarios, shadowing before improvising.

Five surfaces. One coherent experience.

What started as a web dictionary expanded into a full product ecosystem. Each surface serves a different function, but they all feed into the same system.

Web Dictionary

AI-powered tool that plugs into the community. Keeps people engaged.

Community Platform

Workshops, tools, and plugins. The retention engine that reduced churn.

Mobile Voice Tutor

The revenue driver. Where learners practice speaking English.

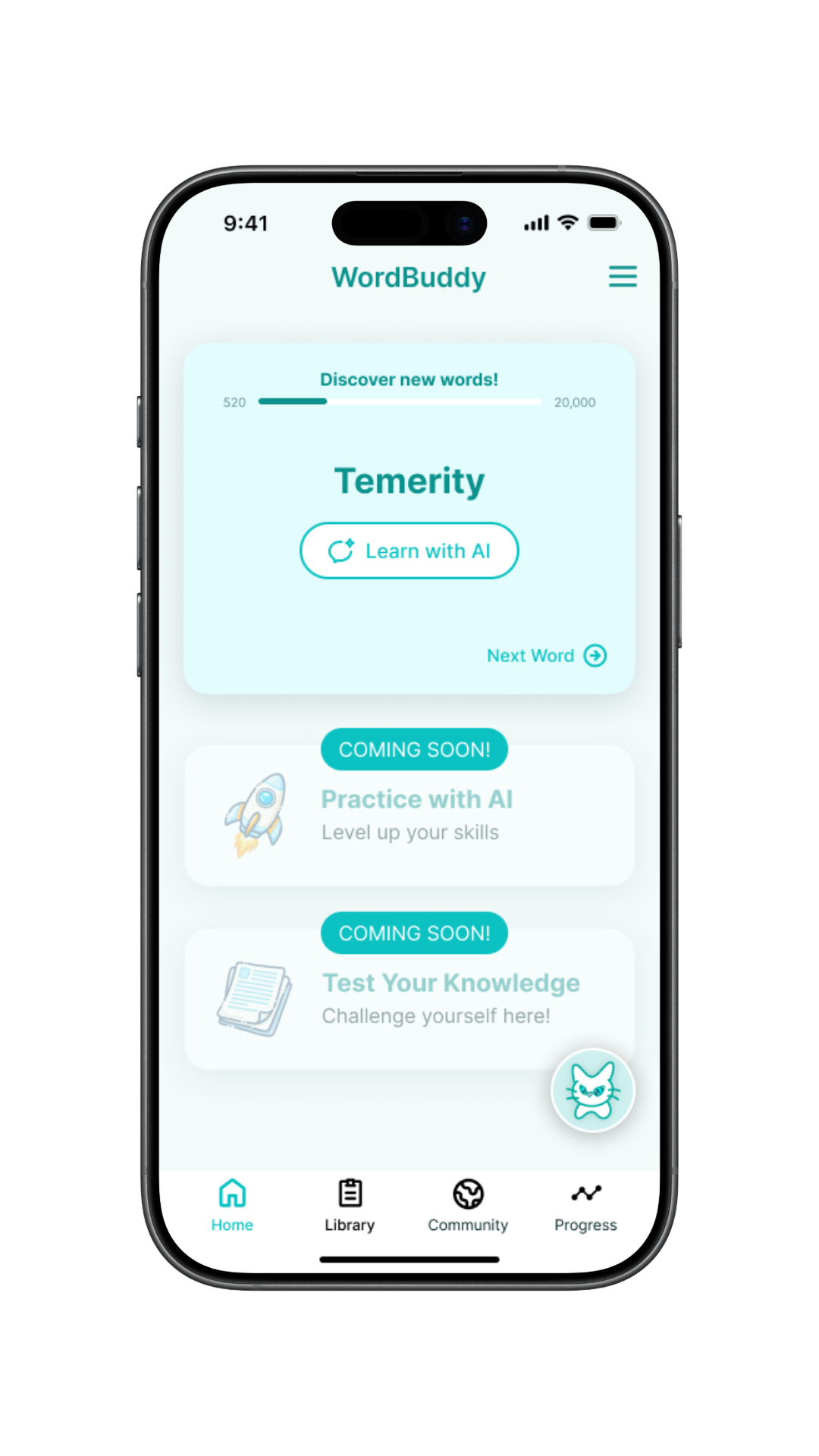

Mobile Dictionary

Full AI dictionary on native mobile. Fast lookups, tappable words.

Marketing Site

Landing page with newsletter signup. Top of the funnel.

The business model wasn't obvious. That was the point.

We started with a web dictionary, then added a community platform, then built a B2C mobile app. Along the way, a B2B path emerged: packaging the AI tooling for existing English learning communities across SEA with hundreds of thousands of members and zero AI capability.

SEA Payment Reality

Fewer than 5% of people in Indonesia use credit cards. We caught this early and integrated Xendit for e-wallets alongside Stripe for Western markets. Most teams discover this constraint on launch day.
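At checkout, the split comes down to picking a provider per market. A rough sketch, assuming routing by country code: the `pick_provider` function and the country set are hypothetical, and only Indonesia is listed because it is the only market named here.

```python
# Hypothetical routing table: only Indonesia is named in the text above.
EWALLET_FIRST_COUNTRIES = {"ID"}


def pick_provider(country_code: str) -> str:
    """Return 'xendit' for e-wallet-first markets, 'stripe' otherwise."""
    code = country_code.strip().upper()
    return "xendit" if code in EWALLET_FIRST_COUNTRIES else "stripe"


indonesia = pick_provider("id")
united_states = pick_provider("US")
```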

Scope Discipline

New ideas landed every week. The product surface kept expanding. We called a hard MVP freeze: locked the screens, stopped accepting changes, set a structured timeline. Without that call, nothing ships.

Founder Growth

The founder went from "I have zero networking with IT people" to running a live product ecosystem across five surfaces. The product shipped. The founder leveled up. Both mattered.